A team of researchers from MIT, Worcester Polytechnic Institute, and Google has introduced a new method to reduce bias in vision-language models (VLMs), which are used in high-stakes applications like medical diagnosis. The study, accepted to the 2026 International Conference on Learning Representations, proposes Weighted Rotational DebiasING (WRING), an approach designed to address longstanding limitations of existing debiasing techniques.

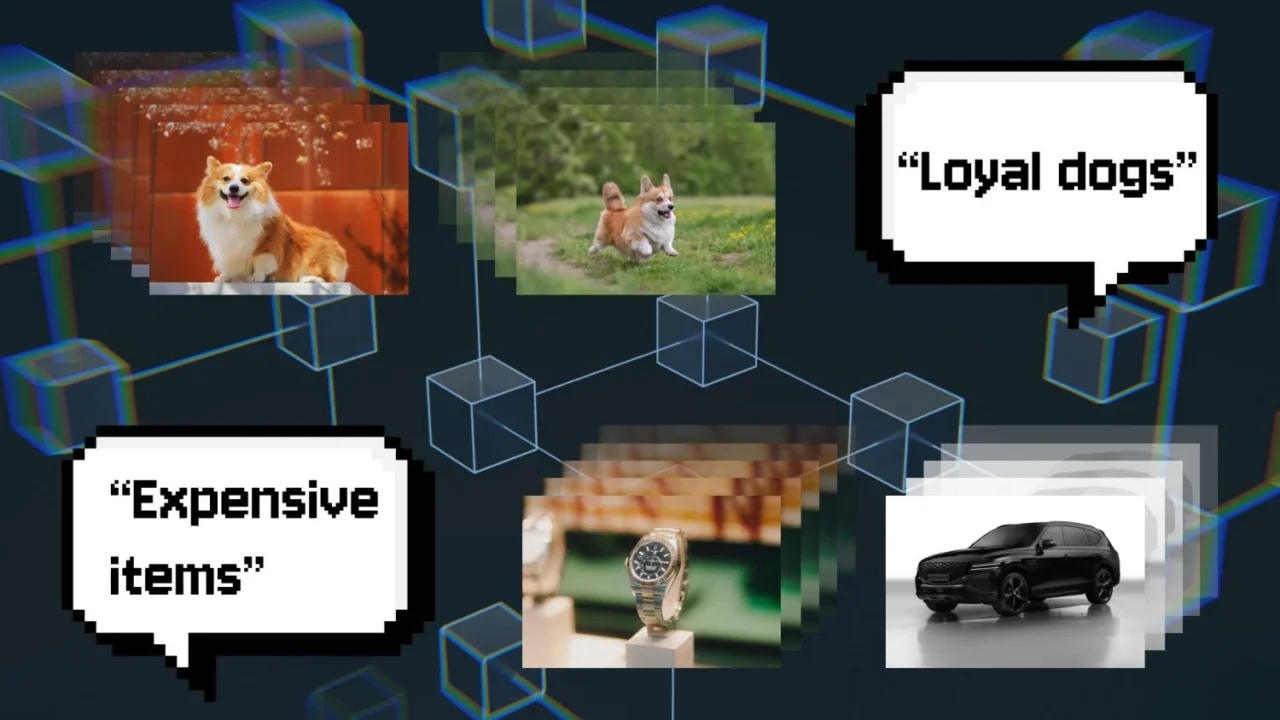

AI models, particularly in sensitive areas such as dermatology, often face bias challenges that can affect performance across different demographic groups. Traditional methods like projection debiasing attempt to remove biased information by eliminating the subspace representing that bias from the model’s embedding space. However, this can alter other learned relationships and amplify different biases, a phenomenon researchers call the “Whac-A-Mole dilemma.” For example, removing racial bias in image retrieval could inadvertently increase gender bias.
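To make the projection idea concrete, here is a minimal sketch in plain Python. It is a generic illustration of removing one direction from a vector, not code from the paper; the toy 3-D vectors, the single `bias_direction`, and the helper names are all assumptions made up for this example.

```python
import math

def dot(u, v):
    """Inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))

def cosine(u, v):
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    denom = math.sqrt(dot(u, u)) * math.sqrt(dot(v, v))
    return dot(u, v) / denom if denom else 0.0

def project_out(embedding, bias_direction):
    """Subtract the component of `embedding` that lies along `bias_direction`."""
    scale = dot(embedding, bias_direction) / dot(bias_direction, bias_direction)
    return [e - scale * b for e, b in zip(embedding, bias_direction)]

# Hypothetical toy data: axis 0 plays the role of the "bias" direction.
bias_direction = [1.0, 0.0, 0.0]
image_embedding = [0.6, 0.8, 0.0]
text_embedding = [0.6, 0.0, 0.8]

debiased_image = project_out(image_embedding, bias_direction)
debiased_text = project_out(text_embedding, bias_direction)

similarity_before = cosine(image_embedding, text_embedding)
similarity_after = cosine(debiased_image, debiased_text)
```

In this toy case the debiased vectors no longer carry any component along the bias axis, but the image vector has also become shorter and its similarity to the text vector has changed. That is one simple way projection can disturb relationships it was not meant to touch.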

WRING tackles this issue by rotating selected bias-associated coordinates within the model’s high-dimensional embedding space. This rotation disrupts the model’s ability to differentiate between groups on biased concepts while preserving its other relational structures. Importantly, WRING acts as a post-processing step that can be applied efficiently to pre-trained models without retraining from scratch, saving considerable resources.
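The article does not describe how WRING chooses its coordinates, angles, or weights, so the following is only a sketch of the underlying geometric idea: rotating a vector within a plane spanned by two chosen coordinates. The function name `rotate_coords`, the indices, the angle, and the toy vector are hypothetical and chosen purely for illustration.

```python
import math

def rotate_coords(vec, i, j, theta):
    """Rotate `vec` by angle `theta` in the plane of coordinates i and j.

    All other coordinates are left exactly as they were.
    """
    c, s = math.cos(theta), math.sin(theta)
    out = list(vec)
    out[i] = c * vec[i] - s * vec[j]
    out[j] = s * vec[i] + c * vec[j]
    return out

def norm(v):
    """Euclidean length of a vector."""
    return math.sqrt(sum(x * x for x in v))

# Hypothetical toy embedding; coordinates 0 and 1 stand in for the
# "selected" coordinates, coordinates 2 and 3 for everything else.
embedding = [0.6, 0.8, 0.5, -0.2]
rotated = rotate_coords(embedding, 0, 1, math.pi / 2)

length_before = norm(embedding)
length_after = norm(rotated)
```

Unlike the projection approach, a rotation of this kind keeps the vector's length and leaves the untouched coordinates unchanged, which gives some intuition for why a rotation-based method could be less disruptive to the rest of the representation.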

Walter Gerych, first author of the paper and now an assistant professor at Worcester Polytechnic Institute, explained that WRING “is very efficient” and “minimally invasive,” making it appealing for real-world use where training large AI models is expensive. The research team demonstrated that WRING significantly reduces bias for targeted concepts without causing the unintended emergence of new biases elsewhere.

Currently, WRING is primarily effective with Contrastive Language-Image Pre-training (CLIP) models, a class of VLMs that associate images with text for classification or retrieval. As a next step, the researchers aim to extend the method to generative language models like ChatGPT.

The work was supported by funding that includes a National Science Foundation CAREER Award and the Gordon and Betty Moore Foundation. The findings represent a promising development in making AI models safer and more equitable, particularly for applications in healthcare where bias can have serious consequences.

Why it matters

Bias in AI vision models can lead to inaccurate or unfair outcomes, especially in medical settings such as skin lesion classification. WRING’s ability to reduce bias without degrading overall model performance addresses a critical safety concern and could improve AI deployment in healthcare and beyond. Furthermore, its compatibility with pre-trained models enhances its practical potential for industry adoption.

Background

Vision-language models like OpenAI’s CLIP and its open-source reimplementation OpenCLIP combine visual and textual data to perform complex recognition and classification tasks. Existing debiasing methods often rely on projection to remove biased components from model embeddings, but this can shift biases or interfere with other learned relationships. The “Whac-A-Mole dilemma” highlights the difficulty of eliminating bias fully without unintended side effects, making the search for more precise debiasing approaches critical. WRING offers a novel geometric technique to target bias while maintaining the integrity of the model’s overall representation space.

